AI Agent Observability: How to Monitor AI Agent Systems for Business

Launching an AI Agent into business processes is like hiring a star employee who never reports their progress. When AI automatically makes decisions instead of just answering questions, the system becomes a "black box" of potential risk. You don't know how many resources the AI is consuming, which tools it’s using, or why it made a wrong decision. This article helps you solve that problem by establishing a transparent monitoring system, transforming risks into competitive advantages.

Key Takeaways

- Overcoming the AI "Black Box": Understand why traditional Application Performance Monitoring (APM) tools fail against the autonomous nature of AI Agents and the urgent need for specialized Observability systems.

- 3 Core Risks of Lacking Monitoring: Identify consequences ranging from lack of audit logs (legal violations) to operational debugging difficulties and the erosion of customer trust.

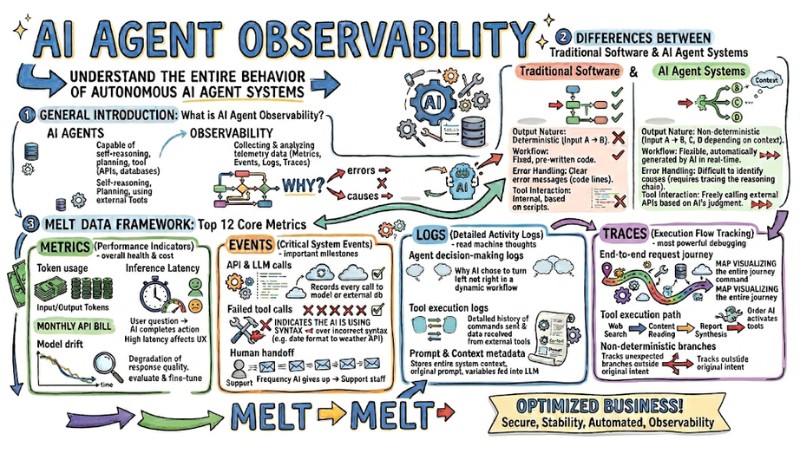

- The MELT Gold Standard: Master a comprehensive data measurement framework including: Metrics (performance/cost), Events (system events), Logs (reasoning logs), and Traces (execution flow tracking).

- The Power of Traces in Debugging: Discover the most critical technique to trace an AI's journey through non-deterministic branches, thereby finding the root cause of every operational error.

- OpenTelemetry (OTel) Strategy: Learn how to use OTel data standardization to build a flexible, vendor-neutral, and easily scalable monitoring system.

- 5-Step Deployment Roadmap: Apply a practical process from defining business goals and standardizing data collection to building feedback loops that make AI smarter.

- FAQ: Decode technical barriers regarding AI ethics, memory management for Multi-agents, and cost optimization through real-time token tracking.

General Introduction to AI Agent Observability

What are AI Agents and AI Agent Observability?

AI Agents are AI applications capable of self-reasoning, planning, and using external tools (APIs, databases) to complete complex goals without step-by-step human intervention.

AI Agent Observability is the process of collecting and analyzing telemetry data to understand the full behavior of autonomous AI Agent systems, including how they interact with LLMs (Large Language Models) and external tools.

Pro-tip: Monitoring tells you the system is encountering an error. Observability tells you exactly why the error occurred.

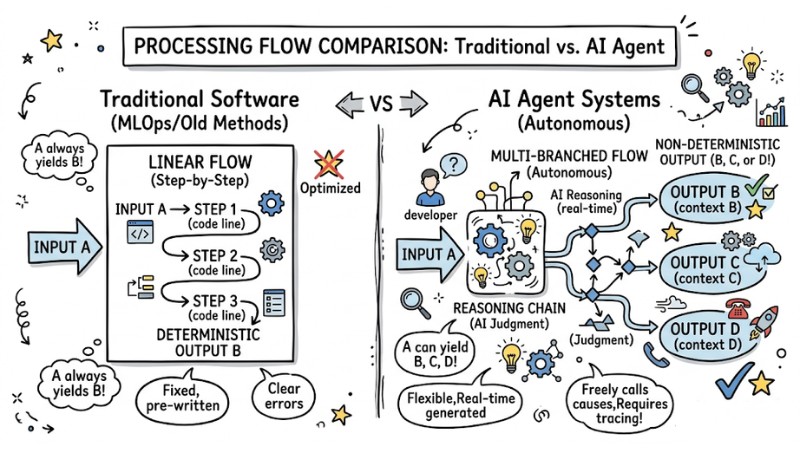

Differences Between Traditional Software and AI Agent Systems

Monitoring methods built for MLOps (Machine Learning Operations) or conventional distributed systems fall short for AI Agents. The reason lies in the nature of the output:

| Criteria | Traditional Software | AI Agent Systems |
|---|---|---|
| Output Nature | Deterministic (Input A always yields B). | Non-deterministic (Input A can yield B, C, D depending on context). |
| Workflow | Fixed, pre-written by developers. | Flexible, automatically generated by AI in real-time. |
| Error Handling | Clear error messages based on code lines. | Difficult to identify causes (requires tracing the AI's reasoning chain). |
| Tool Interaction | Internal interaction based on pre-set scripts. | Freely calling external APIs based on AI's judgment. |

Comparing the linear processing flow of traditional software and the multi-branched, autonomous processing flow of AI Agents

Why Must Businesses Implement AI Agent Observability?

3 Core Risks When AI Systems Lack Observability

When you deploy AI Agents in production, you are granting decision-making power to a machine. Without observability, you face 3 serious risks:

- Compliance and Legal Violations: AI Agents often process sensitive data. Without Decision Audit Logs, businesses cannot explain how the AI reached a conclusion to regulatory agencies.

- Irreparable Operational Errors: When an automated process breaks down, the lack of data makes it impossible for technical teams to perform root cause analysis, leading to recurring errors.

- Erosion of Customer Trust: Customers will leave you if the AI unilaterally makes biased decisions, hallucinates, or causes financial damage without a clear explanation mechanism.

Unique Challenges in Multi-agent Systems

In Multi-agent systems (multiple AI agents working together), complexity grows rapidly because the agents must negotiate and delegate work to each other. Without monitoring, one agent might request the wrong information from another, creating an infinite loop that crashes the system and depletes API budgets in minutes.
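One common guard against runaway delegation is a hop limit carried on every inter-agent message. The sketch below is a minimal illustration under assumptions: `AgentMessage`, `delegate`, `DelegationLoopError`, and the `MAX_HOPS` value are hypothetical names chosen for this example, not part of any agent framework.

```python
from dataclasses import dataclass

MAX_HOPS = 5  # hypothetical limit; tune it to the depth your workflows really need


class DelegationLoopError(RuntimeError):
    """Raised when a task has been passed between agents too many times."""


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    recipient: str
    task: str
    hops: int = 0  # how many times this task has already been delegated


def delegate(msg: AgentMessage, new_recipient: str, max_hops: int = MAX_HOPS) -> AgentMessage:
    """Forward a task to another agent, refusing once the hop limit is reached."""
    if msg.hops + 1 > max_hops:
        raise DelegationLoopError(
            f"task {msg.task!r} exceeded {max_hops} hops "
            f"(last hop {msg.sender} -> {msg.recipient})"
        )
    return AgentMessage(
        sender=msg.recipient,
        recipient=new_recipient,
        task=msg.task,
        hops=msg.hops + 1,
    )
```

Two agents that keep handing the same task back and forth would hit `DelegationLoopError` after five hops instead of looping until the API budget runs out. Recording the hop count as an attribute on each trace also makes such loops visible on a dashboard.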

MELT Data Framework: Top 12 Core Metrics for AI Agent Observability

To break the black box, I recommend applying the MELT data framework. This is the gold standard in AI Agent Observability.

Metrics (Performance Indicators)

Metrics help you evaluate the overall health and operational cost of LLM applications.

- Token usage: Measures input/output tokens. This metric directly determines your monthly API bill.

- Inference Latency: The time from when a user asks a question to when the AI completes the action. High latency directly affects the User Experience (UX).

- Model drift: Tracking the degradation of response quality over time will tell you when you need to evaluate and fine-tune the AI.
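As a rough illustration of these three metrics, the sketch below aggregates token usage, cost, and latency per LLM call and compares quality scores over time. Everything in it is an assumption for the example: the per-token prices are placeholders, and `LLMCall`, `MetricsRecorder`, and `quality_drift` are hypothetical names. The quality scores are assumed to come from whatever evaluator you already use.

```python
from dataclasses import dataclass, field
from statistics import mean

# Placeholder prices in USD per 1,000 tokens; substitute your provider's real rates.
PRICE_PER_1K_INPUT = 0.003
PRICE_PER_1K_OUTPUT = 0.015


@dataclass
class LLMCall:
    input_tokens: int
    output_tokens: int
    latency_s: float  # wall-clock time from user request to completed action

    @property
    def cost_usd(self) -> float:
        return (
            self.input_tokens * PRICE_PER_1K_INPUT
            + self.output_tokens * PRICE_PER_1K_OUTPUT
        ) / 1000


@dataclass
class MetricsRecorder:
    calls: list = field(default_factory=list)

    def record(self, call: LLMCall) -> None:
        self.calls.append(call)

    def total_tokens(self) -> int:
        return sum(c.input_tokens + c.output_tokens for c in self.calls)

    def total_cost_usd(self) -> float:
        return sum(c.cost_usd for c in self.calls)

    def mean_latency_s(self) -> float:
        return mean(c.latency_s for c in self.calls) if self.calls else 0.0


def quality_drift(baseline_scores, recent_scores) -> float:
    """Mean recent score minus mean baseline score; negative means quality dropped."""
    return mean(recent_scores) - mean(baseline_scores)
```

In a real deployment these values would be exported as counters and histograms rather than kept in a list, but the arithmetic behind the monthly bill stays the same.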

Events (Critical System Events)

Events record important milestones when the AI attempts to complete a task.

- API & LLM calls: Records every call to a language model or external database.

- Failed tool calls: Extremely important. This event often indicates that the AI passed malformed arguments when calling a tool (e.g., the wrong date format to a weather API).

- Human handoff: The frequency at which the AI gives up and transfers the request to a support staff member.
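The three event types above can be captured with a very small event log. This is a sketch under assumptions: the event names (`llm_call`, `tool_call_failed`, `human_handoff`) and the `EventLog` class are hypothetical, and a production system would send these events to a telemetry backend instead of a Python list.

```python
import time
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Event:
    name: str         # e.g. "llm_call", "tool_call_failed", "human_handoff"
    attributes: dict  # free-form context such as tool name or failure reason
    timestamp: float = field(default_factory=time.time)


class EventLog:
    def __init__(self):
        self.events = []

    def emit(self, name: str, **attributes) -> Event:
        event = Event(name, attributes)
        self.events.append(event)
        return event

    def counts(self) -> Counter:
        return Counter(e.name for e in self.events)

    def handoff_rate(self, total_requests: int) -> float:
        """Share of requests that ended with the AI transferring to a human."""
        if total_requests <= 0:
            return 0.0
        return self.counts()["human_handoff"] / total_requests
```

For example, `log.emit("tool_call_failed", tool="weather_api", reason="bad date format")` records the weather API case mentioned above with enough context to group failures by tool later.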

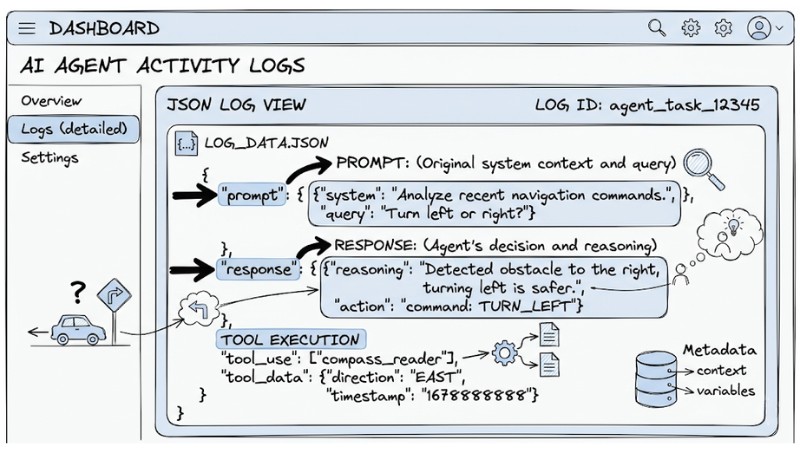

Logs (Detailed Activity Logs)

Logs store text-based reasoning chains, allowing you to read the "thoughts" of the machine.

- Agent decision-making logs: Records why the AI chose to turn left instead of right in a dynamic workflow.

- Tool execution logs: A detailed history of commands sent and data received from external tools.

- Prompt and Context metadata: Stores the entire system context, the original prompt, and variables fed into the LLM at the time the event occurred.
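A decision-making log is easiest to query when each entry is one structured JSON object. The sketch below uses only Python's standard `logging` and `json` modules; the field names (`trace_id`, `chosen_action`, `rationale`, and so on) and the logger name are assumptions for illustration, not a fixed schema.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def format(self, record):
        payload = {"level": record.levelname, "message": record.getMessage()}
        # Fields passed via extra={"agent": {...}} are available as record.agent.
        payload.update(getattr(record, "agent", {}))
        return json.dumps(payload, sort_keys=True)


def build_logger(stream):
    logger = logging.getLogger("agent.decisions")  # hypothetical logger name
    logger.handlers.clear()   # avoid duplicate handlers if called twice
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep agent logs out of the root logger
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


def log_decision(logger, *, trace_id, step, chosen_action,
                 rejected_actions, rationale, prompt_vars):
    """Record why the agent picked one action over the alternatives."""
    logger.info("agent_decision", extra={"agent": {
        "trace_id": trace_id,
        "step": step,
        "chosen_action": chosen_action,
        "rejected_actions": rejected_actions,
        "rationale": rationale,
        "prompt_vars": prompt_vars,
    }})


if __name__ == "__main__":
    buffer = io.StringIO()
    log_decision(build_logger(buffer), trace_id="t-1", step=1,
                 chosen_action="search_flights", rejected_actions=["ask_user"],
                 rationale="origin and date already known",
                 prompt_vars={"origin": "HAN"})
    print(buffer.getvalue(), end="")
```

Keeping the `trace_id` on every entry is what lets you join these logs with the corresponding trace later. Be careful about storing full prompts if they can contain sensitive customer data.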

Dashboard showing a JSON Log of an AI Agent

Traces (Execution Flow Tracking)

This is the most powerful solution for debugging non-deterministic execution paths.

- End-to-end request journey: A map visualizing the entire journey from receiving a command to returning results.

- Tool execution path: Shows the order in which the AI activates tools (e.g., Web Search -> Content Reading -> Report Synthesis).

- Non-deterministic branches: Tracks unexpected branches the AI invents outside the developer's original intent.
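To make the idea of a trace concrete, here is a minimal, self-contained span tree in plain Python. It is not the OpenTelemetry API; `Tracer`, `Span`, `tool_path`, and the `kind` attribute are hypothetical names used only to show how nested spans reveal the tool execution path. It also assumes a single root span per tracer.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    trace_id: str
    attributes: dict = field(default_factory=dict)
    children: list = field(default_factory=list)
    start: float = 0.0
    end: float = 0.0


class Tracer:
    def __init__(self):
        self.trace_id = uuid.uuid4().hex
        self.root = None      # first span opened becomes the root
        self._current = None  # span that is currently open

    @contextmanager
    def span(self, name, **attributes):
        new_span = Span(name, self.trace_id, attributes=dict(attributes))
        if self._current is None:
            self.root = new_span
        else:
            self._current.children.append(new_span)
        previous, self._current = self._current, new_span
        new_span.start = time.perf_counter()
        try:
            yield new_span
        finally:
            new_span.end = time.perf_counter()
            self._current = previous  # restore the parent even on errors


def tool_path(span):
    """Depth-first list of tool spans, in the order the agent started them."""
    path = [span.name] if span.attributes.get("kind") == "tool" else []
    for child in span.children:
        path.extend(tool_path(child))
    return path
```

Wrapping each tool call in `with tracer.span("web_search", kind="tool"):` and then calling `tool_path(tracer.root)` returns the order in which tools ran, which is the same information a trace viewer draws as a waterfall.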

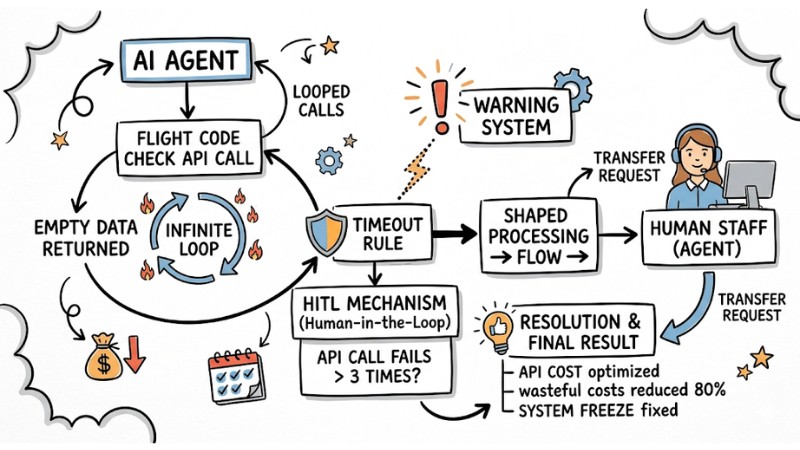

Practical Use Case: Applying AI Agent Observability to Optimize Business

Below is a practical use case from deploying an AI Agent that supports flight bookings for customers.

- Scenario: The system suddenly reports a 300% surge in API costs within 1 hour. The AI Agent is not responding to customers but repeatedly reports a "processing" status.

- Action: The developer opens the Observability tool and checks Traces combined with Events, discovering the AI is stuck in an infinite loop. It is repeatedly calling the flight code check API because the partner's API is returning empty data.

- Solution: At this point, the developer adds a Timeout rule to the tool execution path. Simultaneously, a Human-in-the-loop (HITL) mechanism is established. If the API call fails more than 3 times, the AI is forced to stop and transfer the request to a human agent.

- Result: Completely prevents API "money burning," reduces wasteful costs by 80%, and permanently fixes the system freeze experience for customers.
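The fix described in this use case can be sketched as a guard around the tool call. The threshold of 3 failures comes from the scenario above; everything else is an assumption for illustration, including the names `call_with_guard` and `HumanHandoff`, the 10-second deadline, and the rule that an empty response counts as a failure. The deadline is checked between attempts, so it does not interrupt a call that is itself hanging.

```python
import time

MAX_FAILURES = 3  # from the scenario: more than 3 failures forces a handoff


class HumanHandoff(Exception):
    """Signals that the request must be transferred to a human agent."""


def call_with_guard(tool, *args, max_failures=MAX_FAILURES,
                    deadline_s=10.0, clock=time.monotonic):
    """Call `tool` until it returns data, the failure limit is hit, or time runs out."""
    failures = 0
    started = clock()
    while True:
        if clock() - started > deadline_s:
            raise HumanHandoff(f"gave up after {deadline_s}s without a usable response")
        result = tool(*args)
        if result:  # treat empty data (None, {}, []) as a failed call
            return result
        failures += 1
        if failures > max_failures:
            raise HumanHandoff(f"tool returned empty data {failures} times")
```

With this guard, a partner API that keeps returning empty data produces four calls and one handoff instead of an unbounded loop. Adding a short backoff between attempts would be a natural next step.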

Cost Optimization and Hallucination Control in Customer Service

Standardization and Implementation Tools for AI Agent Observability

Distinguishing Between AI Agent Application and AI Agent Framework

To set up monitoring correctly, you need to clearly distinguish between these two levels:

- AI Agent Framework: These are infrastructure libraries like LangGraph, CrewAI, AutoGen. Monitoring here focuses on the performance of core modules and how the Multi-agent system orchestrates flows.

- AI Agent Application: This is the actual product serving users. Monitoring at this level focuses on accuracy, token costs, and end-user behavior.

The Role of OpenTelemetry in GenAI

OpenTelemetry (OTel) is becoming the common standard for GenAI telemetry. Its GenAI SIG (Special Interest Group) leads the work of standardizing data collection. You have 2 main implementation methods:

| Method | Advantages | Disadvantages |
|---|---|---|
| Baked-in instrumentation (Built-in Framework) | Easy to set up, maintained by the framework itself, available immediately with new features. | Adds bloat to the framework, prone to ecosystem lock-in. |
| Instrumentation via OTel library (Independent OTel library) | Completely separates monitoring from the core. Freedom to change measurement tools. | Requires more technical configuration, potential version compatibility risks. |

Recommendation: I always prioritize using an independent OTel library to ensure long-term system scalability.
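The separation that the independent approach offers can be illustrated with a decorator that wraps tool functions without touching their logic. This sketch is plain Python, not the OpenTelemetry API: `instrumented`, `set_exporter`, `InMemoryExporter`, and `lookup_flight` are hypothetical names. In a real setup, the exporter role would be played by an OTel SDK exporter.

```python
import functools
import time


class InMemoryExporter:
    """Stand-in backend; swap it for another exporter without editing tool code."""

    def __init__(self):
        self.records = []

    def export(self, record):
        self.records.append(record)


_exporter = InMemoryExporter()


def set_exporter(exporter):
    """Change where telemetry goes at runtime."""
    global _exporter
    _exporter = exporter


def instrumented(tool_name):
    """Decorator that records status and duration for every call to a tool."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise  # telemetry must never swallow the original error
            finally:
                _exporter.export({
                    "tool": tool_name,
                    "status": status,
                    "duration_s": time.perf_counter() - start,
                })
        return wrapper
    return decorator


@instrumented("flight_lookup")
def lookup_flight(code):
    # Hypothetical tool body; the monitoring code above never needs to change it.
    if not code:
        raise ValueError("empty flight code")
    return {"code": code, "status": "on time"}
```

Because the tool only knows about the decorator, switching monitoring vendors means calling `set_exporter` with a different backend, which is the flexibility the recommendation above is aiming for.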

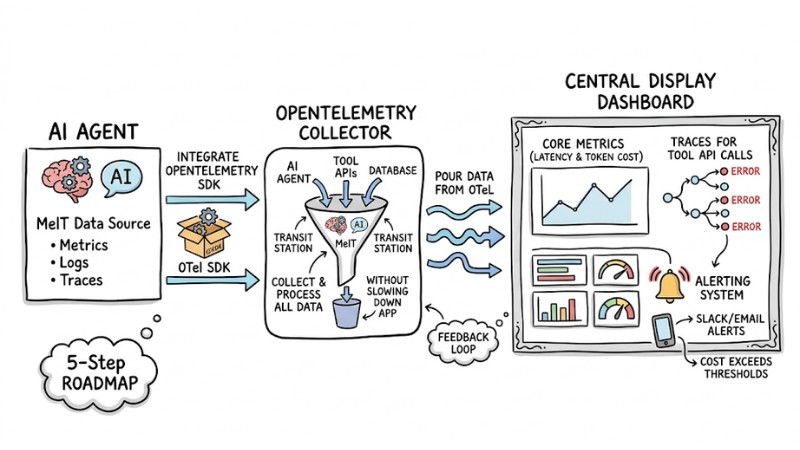

Practical Guide: 5-Step Roadmap for AI Agent Observability Deployment

Instead of trying to track everything from day one, follow these 5 steps to effectively control the AI application lifecycle:

- Define Clear Business Goals: Before writing any monitoring code, decide what you want to optimize: Is it cutting Token usage costs, increasing response speed, or reducing hallucination rates?

- Standardize Data Collection with OpenTelemetry: Integrate the OpenTelemetry SDK into your AI application. Simultaneously, use the OTel Collector as a transit station to collect all MELT data without slowing down the main application.

- Start with Core Metrics (Metrics & Traces): Prioritize measuring inference latency and drawing Traces for tool API calling tasks. This is where 80% of autonomous AI system errors occur.

- Set Up Dashboards and Alerting Systems: Feed data from OTel into visualization tools. Set up automatic alerts via Slack/Email when Token costs exceed thresholds or Human handoff rates spike within 15 minutes.

- Build a Continuous Feedback Loop: Use the collected Logs as input to fine-tune prompts or re-evaluate the LLM. Observability is not just for fixing errors; it is the raw material to make AI smarter.
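The alerting rule in step 4 can be sketched as a sliding window over recent requests. The 15-minute window comes from the roadmap above; the threshold values, the `SlidingWindowRate` class, and the `check_alerts` function are assumptions for illustration. Delivery to Slack or email is left out and would consume the returned messages.

```python
from collections import deque

WINDOW_S = 15 * 60  # 15-minute window, as in step 4


class SlidingWindowRate:
    """Tracks the share of requests in the last window that ended in a human handoff."""

    def __init__(self, window_s=WINDOW_S):
        self.window_s = window_s
        self._samples = deque()  # (timestamp, is_handoff), oldest first

    def add(self, timestamp, is_handoff):
        self._samples.append((timestamp, is_handoff))
        self._evict(timestamp)

    def _evict(self, now):
        # Drop samples that are at least one full window old.
        while self._samples and self._samples[0][0] <= now - self.window_s:
            self._samples.popleft()

    def rate(self, now):
        self._evict(now)
        if not self._samples:
            return 0.0
        handoffs = sum(1 for _, is_handoff in self._samples if is_handoff)
        return handoffs / len(self._samples)


def check_alerts(handoff_rate, token_cost_usd,
                 max_handoff_rate=0.2, max_cost_usd=50.0):
    """Return alert messages; the default thresholds are placeholders."""
    alerts = []
    if handoff_rate > max_handoff_rate:
        alerts.append(f"handoff rate {handoff_rate:.0%} exceeds {max_handoff_rate:.0%}")
    if token_cost_usd > max_cost_usd:
        alerts.append(f"token cost ${token_cost_usd:.2f} exceeds ${max_cost_usd:.2f}")
    return alerts
```

Starting with two or three alerts tied to the business goals from step 1 tends to be more useful than alerting on every metric at once.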

System architecture describing data flow from AI Agent to Central Display Dashboard

FAQ about AI Agent Observability

Why is AI Agent Observability important for businesses?

AI Agent Observability plays a core role in ensuring AI Ethics and Compliance. This visibility helps businesses control API costs, prevent operational errors, and provide clear explanations for every automated AI decision to customers.

How does Observability capture agent behavior?

Through instrumentation, the system continuously records MELT data in real-time. It captures the entire Prompt context, metadata, and AI interactions with external tools to create a complete event chain.

Can traditional Application Performance Monitoring (APM) tools be used for AI Agents?

Not entirely. Traditional APM is great for measuring CPU or memory, but it's ineffective with the non-deterministic execution paths of AI. You need specialized tools capable of analyzing Agent decision-making logs and tracing LLM reasoning chains.

What is AI Agent Observability?

AI Agent Observability is the monitoring and understanding of AI agent system behavior, including interactions with large language models and external tools. It helps answer critical questions about agent accuracy, efficiency, and compliance.

How to effectively monitor core AI Agent Observability metrics?

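Monitor the 12 MELT signals: token usage, inference latency, and model drift (Metrics); API and LLM calls, failed tool calls, and human handoff (Events); decision-making logs, tool execution logs, and prompt and context metadata (Logs); and end-to-end request journeys, tool execution paths, and non-deterministic branches (Traces).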

What is the difference between traditional software and autonomous AI systems?

Traditional software has deterministic, predictable outputs. AI Agent systems are built on LLMs, so their outputs are non-deterministic and they behave like "black boxes," which is why they require specialized monitoring methods.

What are the core risks of AI Agents when lacking Observability?

The three main risks are: compliance violations due to lack of audit logs, operational errors difficult to trace back to a root cause, and loss of trust due to inexplicable AI actions or hallucinations.

How to track important system events in an AI Agent?

Monitor events such as API calls, external tool call failures, and cases requiring human intervention (human-in-the-loop). This helps in early detection of bottlenecks.

What do detailed AI Agent activity logs (Logs) include?

They include user interaction logs, LLM exchange logs (prompt, metadata), tool execution logs, and specifically the agent's decision-making process logs.

How does Execution Flow Tracking (Traces) help in debugging AI Agents?

Traces record the entire journey of a request, helping to accurately identify bottlenecks or failure points in an agent's complex workflow.

How to implement AI Agent Observability for businesses?

Implement in 5 steps: define business goals, standardize data collection with OpenTelemetry, start with priority MELT metrics, set up dashboards and alerts, and build a continuous feedback loop.

What is the role of OpenTelemetry in GenAI?

OpenTelemetry provides semantic conventions for AI telemetry data, helping to unify how data is collected and reported from different agent frameworks and applications.

Implementing AI Agent Observability is not just a technical task; it is risk management at the strategic level. By adopting MELT and OpenTelemetry standards, you turn a dangerous "black box" into a transparent, secure, and cost-effective autonomous system. Start reassessing your monitoring architecture and configuring OTel today to take true control of your enterprise's AI system.
