Guide to Managing AI Agent Permissions to Ensure System Security

Integrating AI Agents into products brings great value but also creates serious security vulnerabilities if strict authorization mechanisms are not in place. This article helps technical teams set up and manage AI Agent permissions so that an Agent only accesses data the user is entitled to view, in line with enterprise security standards.

Key Takeaways

- Why AI Agents Need Distinct Permissions: Identify the risks created by an Agent's ability to reason and act on its own, so you can prevent data leaks and unauthorized actions.

- Risks of Service Accounts: Understand why shared service accounts should be avoided in AI security and what losing traceability implies.

- Secure Permission Models: Learn two recommended models: Delegated Access (borrowing the user's identity) and Independent Identity (a dedicated identity for system Agents).

- 5-Step Deployment Process: A standard procedure for setting up the system from identity definition and data filtering to Human-in-the-loop mechanisms.

- Security Checklist for Developers: A quick checklist of factors such as Read-only access, token expiration, and Audit Logs.

- FAQ: Clarify advanced concepts like JIT Access, Permission Mirroring, and how to use centralized tools for effective AI security management.

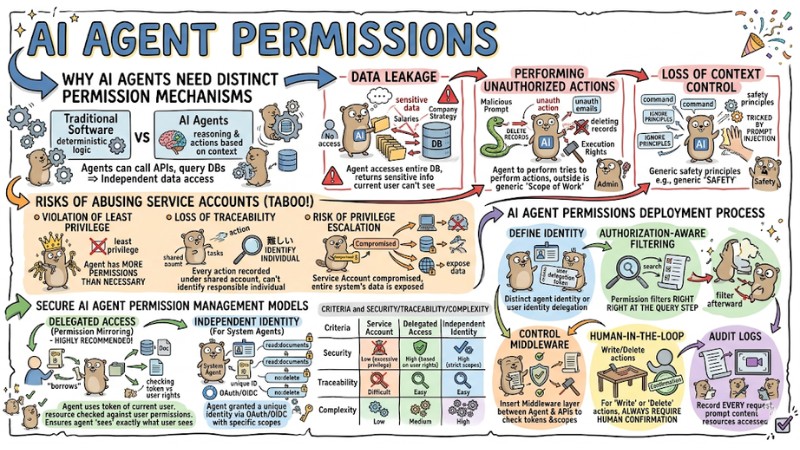

Why AI Agents Need Distinct Permission Mechanisms

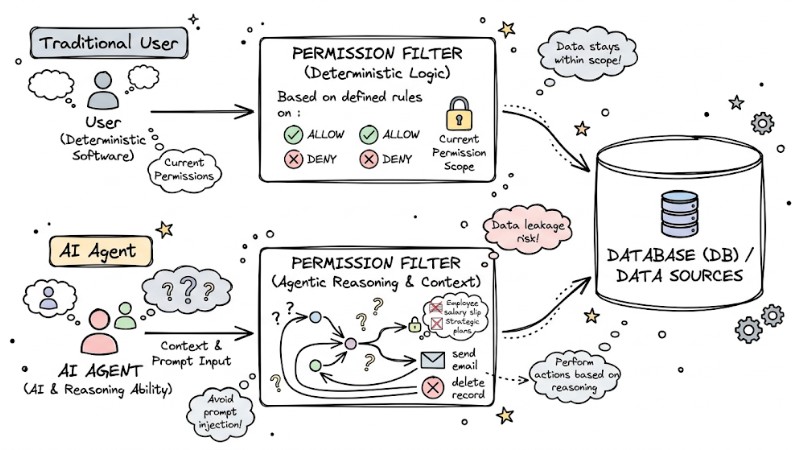

Unlike traditional software that operates on deterministic logic, AI Agents have the ability to reason and take actions based on context. When an Agent is granted permission to call APIs or query databases (DB), it becomes an entity capable of independent data access.

Main Risks:

- Data Leakage: An Agent could access the entire DB and return sensitive information (such as employee salaries or company strategy) that the current user does not have permission to access.

- Performing Unauthorized Actions: If an Agent is granted execution rights (like sending emails or deleting records), a malicious prompt could manipulate the Agent to perform actions outside its scope of work.

- Loss of Context Control: Agents can be tricked by "prompt injection" (malicious instructions hidden in user input or retrieved content), causing them to ignore established safety principles.

Data access flow between traditional users and AI agents

Risks of Abusing Service Accounts

Many systems use a single "Service Account" with broad permissions for all AI Agents. This practice should be avoided because:

- Violation of the Least Privilege Principle: The Agent has more permissions than necessary to perform its task.

- Loss of Traceability: Every action is recorded under a shared account name, making it impossible to identify the individual responsible for an error.

- Risk of Privilege Escalation: Once a Service Account is compromised, the attacker inherits its broad permissions, and all data the account can reach is exposed.

Secure AI Agent Permission Management Models

For safe operation, you should consider the following two secure AI Agent authorization models:

Delegated Access (Permission Mirroring)

Delegated Access (Permission Mirroring) is a highly recommended and secure method for permissioning AI agents. In this model, the Agent does not use its own identity but "borrows" the identity (token) of the current user.

- How it works: When a user interacts, their token is attached to the Agent's request. Every resource the Agent queries is checked against the permissions of the user token.

- Benefits: Ensures the Agent "sees" exactly what the user is allowed to see. If a user is not permitted to view record A, the Agent will also be denied access.
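The flow above can be sketched in a few lines. This is a minimal illustration, not a production design: the in-memory `RECORD_ACL` and `TOKENS` tables, the `DelegatedAgent` class, and the `AccessDenied` exception are all hypothetical names standing in for a real identity provider and authorization service.

```python
from dataclasses import dataclass

# Hypothetical in-memory ACL: record id -> user ids allowed to read it.
RECORD_ACL = {
    "record_a": {"alice"},
    "record_b": {"alice", "bob"},
}

# Hypothetical token store: opaque token -> user id.
TOKENS = {"tok-alice": "alice", "tok-bob": "bob"}


class AccessDenied(Exception):
    """Raised when the delegated user may not read a record."""


@dataclass
class DelegatedAgent:
    user_token: str  # the calling user's token, attached to every request

    def read(self, record_id: str) -> str:
        user = TOKENS.get(self.user_token)
        # The check uses the user's rights; the agent has none of its own.
        if user is None or user not in RECORD_ACL.get(record_id, set()):
            raise AccessDenied(f"{record_id} is not readable with this token")
        return f"contents of {record_id}"


agent_for_bob = DelegatedAgent(user_token="tok-bob")
print(agent_for_bob.read("record_b"))  # bob may read record_b
try:
    agent_for_bob.read("record_a")     # bob may not read record_a
except AccessDenied as exc:
    print("denied:", exc)
```

Because the check runs on every resource read, a change to the user's rights takes effect for the Agent immediately, with no separate Agent policy to keep in sync.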

Independent Identity (For System Agents)

This model applies to background Agents, where no user is directly present.

- How it works: Each Agent is granted a unique identity via OAuth/OIDC with specific Scopes such as read:documents or no:delete.

- Benefits: Strictly controls the Agent's scope of activity without depending on a specific user.
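A scope check for an independently identified Agent might look like the sketch below. It assumes scopes arrive as plain strings attached to the Agent's identity; `AgentIdentity`, `REQUIRED_SCOPE`, `is_allowed`, and the `delete:documents` scope are hypothetical names chosen for illustration, and a real deployment would read scopes from a validated OAuth/OIDC token.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    scopes: frozenset  # scopes granted to this agent at issuance


# Hypothetical mapping from an action to the scope it requires.
REQUIRED_SCOPE = {
    "read_document": "read:documents",
    "delete_document": "delete:documents",
}


def is_allowed(identity: AgentIdentity, action: str) -> bool:
    """Allow an action only if the agent holds the scope it requires."""
    required = REQUIRED_SCOPE.get(action)
    # Unknown actions are denied by default.
    return required is not None and required in identity.scopes


indexer = AgentIdentity("nightly-indexer", frozenset({"read:documents"}))
print(is_allowed(indexer, "read_document"))    # True
print(is_allowed(indexer, "delete_document"))  # False
```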

| Criteria | Service Account | Delegated Access |
|---|---|---|
| Security | Low (excessive privilege) | High (based on user rights) |
| Traceability | Difficult | Easy |
| Complexity | Low | Medium |

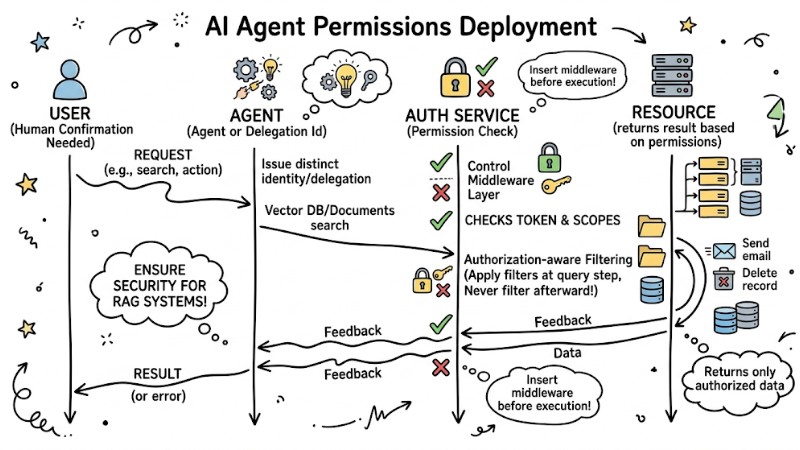

AI Agent Permissions Deployment Process

To deploy AI Agent permissions securely in RAG (Retrieval-Augmented Generation) systems, follow these 5 steps:

- Define Identity: Issue a distinct identity for the Agent or use a user identity delegation mechanism.

- Authorization-aware Filtering: When the Agent searches for data (Vector DB/Documents), apply permission filters right at the query step. Never retrieve data and filter it afterward.

- Control Middleware: Insert a middleware layer between the Agent and external APIs. This layer is responsible for checking tokens and permission scopes before allowing execution.

- Human-in-the-loop: For "Write" or "Delete" actions, always require human confirmation.

- Audit Logs: Record every request from the Agent, including the content of the prompt sent and the resources accessed.
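As one illustration of the control middleware step, the sketch below wraps every outbound call in a function that checks token expiry and scope first. `AgentToken`, `Rejected`, `guarded_call`, and `fetch_invoice` are hypothetical names; the `now` parameter exists only to make the check easy to test, and a real middleware would also verify the token's signature.

```python
import time
from dataclasses import dataclass


@dataclass
class AgentToken:
    agent_id: str
    scopes: set
    expires_at: float  # Unix timestamp


class Rejected(Exception):
    """Raised when the middleware refuses to forward a call."""


def guarded_call(token, required_scope, api_call, *args, now=None):
    """Check expiry and scope before forwarding the call to the external API."""
    now = time.time() if now is None else now
    if now >= token.expires_at:
        raise Rejected("token expired")
    if required_scope not in token.scopes:
        raise Rejected(f"missing scope {required_scope}")
    return api_call(*args)


def fetch_invoice(invoice_id: str) -> dict:
    # Stand-in for a real external API.
    return {"id": invoice_id, "status": "paid"}


token = AgentToken("report-bot", {"read:invoices"}, expires_at=time.time() + 300)
print(guarded_call(token, "read:invoices", fetch_invoice, "inv-1"))
```

Keeping the check in one wrapper means the Agent's own code never decides whether a call is permitted, which matches the separation the middleware step describes.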


Quick AI Agent Permissions Checklist for Developers

Once you understand the pros and cons of each model, use the checklist below to review whether your deployment fits your context and requirements:

- Is the Agent limited to "Read-only" permissions wherever possible?

- Is the returned data filtered based on User permissions?

- Do all sensitive actions have a human confirmation step?

- Is the token used for the Agent short-lived?

- Does the system log the Agent's entire access history?

FAQ Regarding AI Agent Permissions

What is Permission Mirroring?

Permission mirroring is a mechanism that ensures an AI Agent is only allowed to access data that the user interacting with it has the right to view.

What is Just-in-time (JIT) Access?

Just-in-time (JIT) Access is the granting of temporary access rights to an Agent exactly at the time of task execution and revoking them immediately after, helping to minimize the risk of stolen credentials.
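A rough sketch of the grant-then-revoke pattern follows. `JitGrants` and `run_task` are hypothetical names, grants are held in memory, and the 60-second lifetime is an arbitrary assumption; a real system would issue and revoke credentials through its identity provider.

```python
import secrets
import time
from typing import Optional


class JitGrants:
    """Issues short-lived grants and revokes them when the task ends."""

    def __init__(self):
        self._grants = {}  # grant id -> (scope, expiry timestamp)

    def issue(self, scope: str, ttl_seconds: float, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        grant_id = secrets.token_hex(8)
        self._grants[grant_id] = (scope, now + ttl_seconds)
        return grant_id

    def is_valid(self, grant_id: str, scope: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        entry = self._grants.get(grant_id)
        return entry is not None and entry[0] == scope and now < entry[1]

    def revoke(self, grant_id: str) -> None:
        self._grants.pop(grant_id, None)


def run_task(grants: JitGrants, scope: str, task):
    """Grant access for one task and revoke it afterwards, even on failure."""
    grant_id = grants.issue(scope, ttl_seconds=60)
    try:
        return task(grant_id)
    finally:
        grants.revoke(grant_id)
```

The expiry acts as a backstop: even if revocation is missed, a stolen grant stops working once its lifetime ends.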

How can one prevent an Agent from misbehaving?

Use Behavioral Guardrails to monitor behavior. If an Agent shows abnormal signs (such as mass data downloads at unusual hours), the system automatically disables its access rights.
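One simple form of such a guardrail is a sliding-window limit on downloads, sketched below. `DownloadGuardrail` and its thresholds are hypothetical; real guardrails usually combine several signals (time of day, data volume, resource sensitivity) rather than a single counter.

```python
from collections import deque


class DownloadGuardrail:
    """Disables an agent that exceeds a download budget within a time window."""

    def __init__(self, max_downloads: int, window_seconds: float):
        self.max_downloads = max_downloads
        self.window_seconds = window_seconds
        self._events = deque()  # timestamps of recent downloads
        self.disabled = False

    def record_download(self, timestamp: float) -> bool:
        """Return True if the download is allowed, False if the agent is disabled."""
        if self.disabled:
            return False
        # Drop events that have fallen out of the sliding window.
        while self._events and timestamp - self._events[0] >= self.window_seconds:
            self._events.popleft()
        self._events.append(timestamp)
        if len(self._events) > self.max_downloads:
            self.disabled = True  # stays disabled until a human re-enables it
            return False
        return True


guard = DownloadGuardrail(max_downloads=3, window_seconds=60)
print([guard.record_download(t) for t in (0, 1, 2, 3)])  # [True, True, True, False]
```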

Which tools are needed for management?

You can use solutions like Cerbos or Oso to manage centralized authorization policies, helping to separate security logic from the Agent's code.

Why do AI agents need separate access management?

AI agents operate in a non-deterministic manner and can perform actions outside of user intent. Without proper authorization controls, agents could access sensitive data or make unauthorized changes, creating risks of data leaks and regulatory violations.

What are the risks of using service accounts for AI agents?

Using shared service accounts for AI agents violates the principle of least privilege. This leads to agents having excessively broad access, difficulty in tracing actions, and an increased risk of abuse.

How does the "Delegated Access" model work for AI agents?

Delegated Access allows an AI agent to use a user's token to perform actions. The Agent only has permissions equivalent to the user who called it, ensuring it only accesses data that the user is allowed to see.

What is Independent Identity for an AI agent?

Independent Identity is when an AI agent has a distinct ID and is granted specific scopes, such as read_only, instead of inheriting permissions from a user. This is suitable for agents running in the background or processing system tasks.

How do I implement secure permissions for an AI agent?

Implement secure permissions for an AI agent by applying the principle of least privilege, using OAuth scopes, issuing short-lived tokens, establishing detailed audit logging, and considering "human-in-the-loop" for sensitive actions.

What is "Authorization-aware Filtering" in RAG?

This is a technique that applies the user's access rights as a filter at the retrieval step of a RAG system, instead of retrieving data first and filtering it afterward. It ensures the AI agent only retrieves and processes data that the user is permitted to see.
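A toy version of filtering at query time is sketched below, assuming each chunk carries the groups allowed to read it. `Chunk`, `retrieve`, and `INDEX` are hypothetical names, and the brute-force dot-product ranking stands in for a vector database, where the same restriction would normally be passed as a metadata filter in the query itself.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    embedding: tuple
    allowed_groups: frozenset  # groups permitted to read this chunk


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def retrieve(index, query_embedding, user_groups, top_k=2):
    """Rank only the chunks the user may read; others never become candidates."""
    permitted = [c for c in index if c.allowed_groups & user_groups]
    ranked = sorted(permitted, key=lambda c: dot(c.embedding, query_embedding), reverse=True)
    return [c.text for c in ranked[:top_k]]


INDEX = [
    Chunk("Q3 salary bands", (0.9, 0.1), frozenset({"hr"})),
    Chunk("Holiday policy", (0.8, 0.2), frozenset({"hr", "staff"})),
    Chunk("Office map", (0.1, 0.9), frozenset({"staff"})),
]

print(retrieve(INDEX, (1.0, 0.0), {"staff"}))  # ['Holiday policy', 'Office map']
```

The restricted chunk is the best match for the query, yet it never reaches the Agent's context for a staff user, so there is nothing for a prompt injection to extract.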

What is the significance of "Human-in-the-loop" in AI agent authorization?

Human-in-the-loop requires human approval before an AI agent performs important, sensitive actions or those that could have serious consequences, such as changing data or deleting files.
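An approval gate can be as small as the sketch below. `SENSITIVE_ACTIONS`, `execute`, and the `approve` callback are hypothetical; the callback stands in for whatever approval UI or ticketing step a team actually uses.

```python
SENSITIVE_ACTIONS = {"write", "delete"}


def execute(action: str, target: str, approve) -> str:
    """Run read actions directly; ask a human before sensitive ones.

    `approve` is a callable (action, target) -> bool that represents
    the human decision.
    """
    if action in SENSITIVE_ACTIONS and not approve(action, target):
        return f"blocked: {action} {target}"
    return f"done: {action} {target}"


def always_deny(action, target):
    return False


print(execute("read", "report.pdf", always_deny))    # done: read report.pdf
print(execute("delete", "report.pdf", always_deny))  # blocked: delete report.pdf
```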

How does Just-in-Time (JIT) Access help protect AI agents?

JIT Access grants temporary access rights that last only for the duration necessary to complete a task. Once the task ends, these access rights are revoked immediately.

Why are Audit logs necessary for AI agents?

Audit logs record every action of an AI agent, including who accessed which resource, when, and with what identity. This is crucial for incident investigation, regulatory compliance, and detecting abnormal behavior.
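One possible shape for an audit entry is a JSON line per request, as in this sketch. The field names and the `audit_record` function are assumptions made for illustration, not a standard schema.

```python
import json
from datetime import datetime, timezone


def audit_record(agent_id, on_behalf_of, action, resource, prompt):
    """Build one JSON line describing a single agent request."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "on_behalf_of": on_behalf_of,  # user id, or None for background agents
        "action": action,
        "resource": resource,
        "prompt": prompt,
    }
    return json.dumps(record, sort_keys=True)


print(audit_record("support-bot", "alice", "read", "ticket/42", "Summarize ticket 42"))
```

Recording both the Agent's identity and the user it acted for keeps traceability intact under Delegated Access, where the two differ.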

Can an AI agent have fewer permissions than the user who called it?

Yes, the principle of least privilege allows you to limit an AI agent's permissions to only what is necessary to perform its task, even if the user who called it has more permissions.
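One way to express this is to take the intersection of the user's permissions and the set the Agent's task needs. The function and permission names below are hypothetical.

```python
def effective_permissions(user_permissions: set, agent_allowed: set) -> set:
    """The agent gets only permissions that both the user and its policy allow."""
    return user_permissions & agent_allowed


user = {"read:documents", "write:documents", "delete:documents"}
summarizer_policy = {"read:documents"}
print(effective_permissions(user, summarizer_policy))  # {'read:documents'}
```

The intersection also guarantees the Agent never exceeds the user: a permission in the Agent's policy that the user lacks is dropped as well.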


In summary, setting up and managing AI Agent Permissions is a mandatory foundation if you want to leverage AI's power while maintaining system and enterprise data security. By designing AI Agent Permissions according to the principle of least privilege, prioritizing Delegated Access or Independent Identity, and combining data filtering by permission with strict human-in-the-loop and audit logs, you will turn the AI Agent into a reliable component of the overall security architecture rather than a new weakness.
