AI Agent Secrets Management: 7 Optimal Security Solutions

API key leakage is not a minor security glitch. It is one of the most serious causes of data breaches, system takeovers, and heavy financial and reputational damage. If you grant autonomy to AI while still relying on manual password management, you are creating a fatal vulnerability. In this article, I will guide you through 7 practical AI Agent Secrets Management strategies to protect the "technical accounts" that AI agents and systems use to access APIs, data, and services.

Key Takeaways

- Redefining Non-human Security: Understand that AI Agent Secrets Management is the process of protecting "technical accounts" (machine-to-machine), the most critical link in preventing large-scale data leaks.

- Identifying Risks from Static Keys: Learn why .env files and manual management methods are "death traps" that leave systems open to attacks through source code repositories and CI/CD pipelines.

- 7 Practical Security Strategies: Equip yourself with a roadmap from applying Dynamic Credentials and Proxy Key architecture to Tokenization and Machine Identity management.

- Standard Proxy Key Architecture: Understand the secure workflow where the Agent never directly contacts the root key, helping to isolate risks and enhance control.

- Choosing the Right Platform: Compare HashiCorp Vault, Akeyless, and Onboardbase to find the optimal secrets management solution for enterprise or startup scales.

- FAQ: Clarify how to apply Zero Trust to AI, how to balance security and latency, and whether .env files are still acceptable during testing.

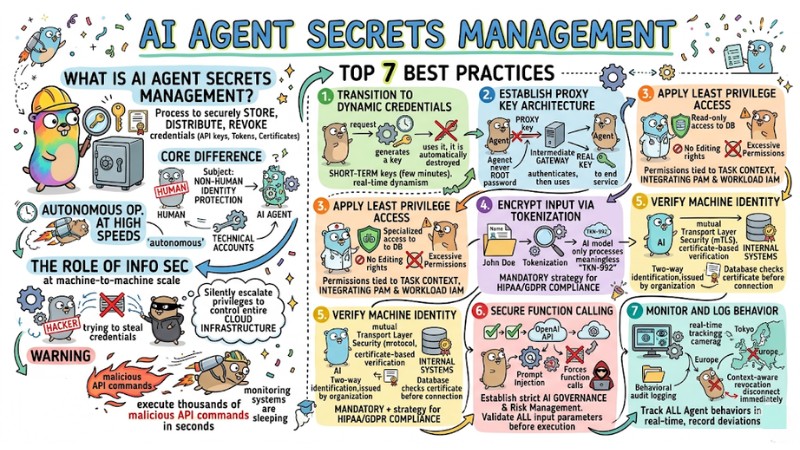

What is AI Agent Secrets Management?

Basic Definition of Secrets Management for AI

AI Agent Secrets Management is the process of securely storing, distributing, and revoking credentials such as API keys, tokens, and certificates used by AI agents. The core difference lies in the subject: it protects non-human identities, meaning the accounts and keys that systems and AI Agents use to connect to services, rather than human users.

Unlike traditional user accounts, these identities act autonomously and at machine speed. Protecting these "technical accounts" is therefore the core of credential security for AI agents.

The Role of Information Security in AI Agent Systems

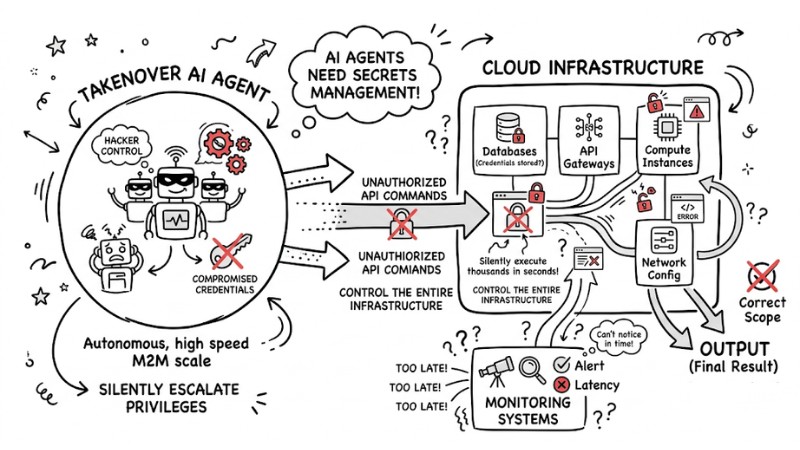

AI Agents have very high autonomy and communicate continuously with other machines at large scale. If a hacker compromises an Agent's credentials, they can silently escalate privileges and take control of the entire cloud infrastructure.

Warning: A compromised, highly autonomous AI Agent can execute thousands of malicious API commands within seconds, before monitoring systems even notice.

The AI agent was compromised, affecting the cloud infrastructure

Why Traditional Secrets Management is No Longer Suitable

Risks from Static Keys and .env Files

Managing static secrets in .env files, or hardcoding them directly into source code, is extremely dangerous. A small oversight when committing code to GitHub, or when storing secrets in CI/CD pipelines, can expose your OpenAI API key to attackers. Static keys cannot rotate or expire on their own, so a leaked key stays valid until someone notices and revokes it, which makes them easy targets for hackers.

Practical Advice: Never store .env files containing real keys in source code repositories, including private repos. Instead, use dedicated secrets and environment variable management tools.
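As a minimal Python sketch of the alternative, assume that a secrets manager or CI runner injects the key into the process environment at runtime. The variable name `OPENAI_API_KEY` and the helper names are illustrative. The application reads the key at startup, fails fast if it is missing, and only ever logs a masked form:

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret was not injected into the environment."""


def require_secret(name: str) -> str:
    """Read a secret injected at runtime (e.g. by a secrets manager or CI runner).

    Fails fast instead of falling back to a hardcoded default, so a missing
    secret is caught at startup rather than silently replaced.
    """
    value = os.environ.get(name)
    if not value:
        raise MissingSecretError(f"required secret {name!r} is not set")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Return a log-safe version of a secret that shows only its last characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

For example, `api_key = require_secret("OPENAI_API_KEY")` followed by logging only `mask(api_key)` keeps the full key out of both the repository and the logs.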

Limitations of Traditional Vaults Against AI Flexibility

Legacy vault systems were designed for static servers and struggle to keep pace with fast-changing AI workloads. Their biggest drawbacks are high latency and a lack of Just-in-time (JIT) provisioning.

AI Agents, in contrast, need context-aware authentication decisions within milliseconds. If every request must wait for a heavyweight authentication process, the entire automation flow becomes congested.

Top 7 Best Practices for AI Agent Secrets Management

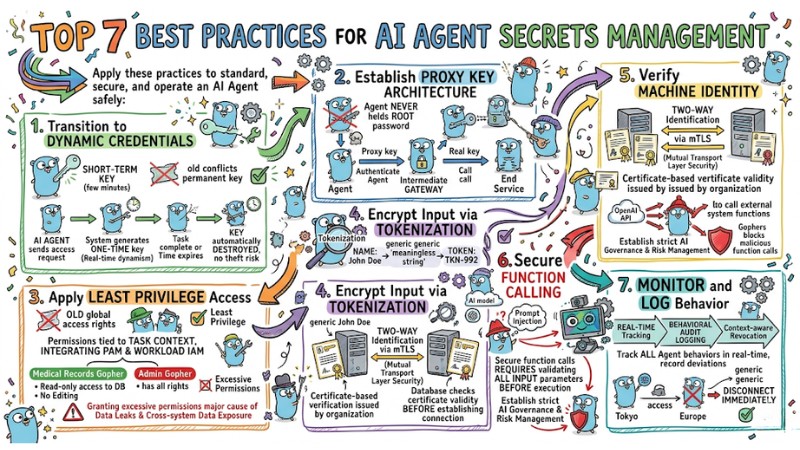

1. Transition to Dynamic Credentials

Instead of granting a permanent API key, use dynamic credentials: short-lived keys that exist for only a few minutes. The flow works as follows:

- The AI Agent sends an access request to the target service.

- The system generates a one-time authentication key (Real-time credential dynamism).

- Once the Agent completes the task (or the time expires), the key is automatically destroyed, so a stolen key quickly becomes useless.
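The issue/expire cycle above can be sketched in Python as a small in-memory issuer. `DynamicCredentialIssuer` and its methods are hypothetical names. In production a secrets manager (for example, Vault's dynamic secrets engines) plays this role, and the TTL values here are only illustrative:

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Credential:
    token: str
    agent_id: str
    scope: str
    expires_at: float


class DynamicCredentialIssuer:
    """Hypothetical in-memory issuer of short-lived, single-use credentials."""

    def __init__(self, ttl_seconds: float = 300,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._live: Dict[str, Credential] = {}

    def issue(self, agent_id: str, scope: str) -> Credential:
        """Generate a fresh random key for one request, valid for `ttl` seconds."""
        cred = Credential(
            token=secrets.token_urlsafe(32),
            agent_id=agent_id,
            scope=scope,
            expires_at=self.clock() + self.ttl,
        )
        self._live[cred.token] = cred
        return cred

    def redeem(self, token: str, scope: str) -> Optional[Credential]:
        """Validate and consume a credential; returns None if it is not usable."""
        cred = self._live.pop(token, None)  # pop => the key works at most once
        if cred is None:
            return None
        if self.clock() >= cred.expires_at or cred.scope != scope:
            return None  # expired or wrong scope: already consumed, so it is gone
        return cred

    def purge_expired(self) -> int:
        """Destroy credentials whose TTL has run out; returns how many were removed."""
        now = self.clock()
        expired = [t for t, c in self._live.items() if now >= c.expires_at]
        for t in expired:
            del self._live[t]
        return len(expired)
```

Making `redeem` consume the token on the first attempt, even a failed one, is a deliberately conservative choice: a leaked token cannot be retried.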

2. Establish Proxy Key Architecture

In a Proxy key architecture for AI automation, the Agent never communicates directly with the end service. Instead, you set up an intermediate Gateway, and the Agent presents a "Proxy key" when calling it. The Gateway authenticates the Agent, swaps the proxy key for the real key, and forwards the request. The Agent therefore never holds the root credential.

3. Apply Least Privilege Access

Never grant global access rights to an AI. Apply the principle of Least Privilege Access instead, by integrating Privileged Access Management (PAM) and Workload IAM, and tie permissions to the task context. For example, an AI Agent that summarizes medical records should only have read-only access to the database, with no editing rights.

Warning: Granting excessive permissions to AI agents is one of the major causes of data leaks and cross-system data exposure.
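A deny-by-default permission check captures the medical-records example. The following is a minimal Python sketch in which the role names and `resource:action` strings are hypothetical. In a real deployment, these grants would live in your PAM or Workload IAM system rather than in code:

```python
from typing import Dict, FrozenSet

# Hypothetical role -> permission mapping; anything not listed is denied.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "medical-summarizer": frozenset({"records:read"}),
    "billing-agent": frozenset({"invoices:read", "invoices:create"}),
}


class PermissionDenied(Exception):
    pass


def authorize(role: str, action: str) -> None:
    """Deny by default: allow only actions explicitly granted to the role."""
    allowed = ROLE_PERMISSIONS.get(role, frozenset())
    if action not in allowed:
        raise PermissionDenied(f"role {role!r} may not perform {action!r}")
```

With this mapping, the summarizer can call `authorize("medical-summarizer", "records:read")` successfully. An attempt to perform `records:update`, or any request from an unknown role, raises `PermissionDenied`.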

4. Protect Input via Tokenization

Before feeding data into an AI model for analysis, replace all sensitive information with tokens. For example, the name "John Doe" becomes "TKN-992". The AI only processes these meaningless token strings, while the mapping back to the real values stays in a separate, protected store. This is a key technique for supporting HIPAA/GDPR compliance.
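A minimal in-memory tokenization vault in Python illustrates the idea. `TokenVault` is a hypothetical name, and the `TKN-` plus random-hex format only mimics the example above. A production system would keep the mapping in a hardened, access-controlled store:

```python
import secrets
from typing import Dict, Iterable


class TokenVault:
    """Hypothetical in-memory tokenization vault.

    Maps each sensitive value to a random, meaningless token. The mapping
    stays inside the vault; only tokens are sent to the AI model.
    """

    def __init__(self) -> None:
        self._to_token: Dict[str, str] = {}
        self._to_value: Dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the same token for a repeated value so the model can still
        # tell that two mentions refer to the same entity.
        if value in self._to_token:
            return self._to_token[value]
        token = f"TKN-{secrets.token_hex(4)}"
        while token in self._to_value:  # avoid rare random collisions
            token = f"TKN-{secrets.token_hex(4)}"
        self._to_token[value] = token
        self._to_value[token] = value
        return token

    def detokenize(self, token: str) -> str:
        return self._to_value[token]

    def redact(self, text: str, sensitive_values: Iterable[str]) -> str:
        """Replace each known sensitive value in `text` with its token."""
        for value in sensitive_values:
            text = text.replace(value, self.tokenize(value))
        return text

    def restore(self, text: str) -> str:
        """Swap tokens in model output back to real values, inside the trust boundary."""
        for token, value in self._to_value.items():
            text = text.replace(token, value)
        return text
```

The model sees only something like `"Patient TKN-3f9a1c2e reports chest pain."`, and `restore` runs after the response comes back, on the trusted side.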

5. Verify Machine Identity

Communication between AI and internal systems needs two-way identification via mTLS (mutual Transport Layer Security). Machine Identity Management relies on certificates issued by the organization's own certificate authority. When an Agent calls a database, each side checks the other's certificate before the connection is established.
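With Python's standard `ssl` module, the two sides of an mTLS connection can be configured as sketched below. The certificate and key file paths are hypothetical placeholders for files issued by your internal CA. The key line is `verify_mode = ssl.CERT_REQUIRED` on the server side, which rejects any Agent that does not present a valid client certificate:

```python
import ssl
from typing import Optional


def mtls_server_context(
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
    ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Context for an internal service (e.g. the database side).

    CERT_REQUIRED makes the handshake fail unless the connecting agent
    presents a certificate that chains to a trusted CA.
    """
    # With ca_file=None this falls back to the system trust store; in a real
    # deployment, pass only the organization's internal CA bundle.
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH, cafile=ca_file)
    ctx.verify_mode = ssl.CERT_REQUIRED
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)  # server identity
    return ctx


def mtls_client_context(
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
    ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Context for the AI agent: verifies the server and presents its own cert."""
    # SERVER_AUTH defaults already require a valid server cert and hostname match.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)  # agent identity
    return ctx
```

In use, the agent would call something like `mtls_client_context("agent.pem", "agent.key", "org-ca.pem").wrap_socket(sock, server_hostname="db.internal")`, where all names are hypothetical.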

6. Secure Function Calling

With OpenAI API Function Calling, the model can request calls to external system functions. You must establish strict AI Governance and Risk Management, because attackers can use Prompt Injection to trick the AI into calling functions that leak data. Securing function calls requires validating every input parameter before execution.
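One way to enforce this is an allowlist plus strict argument validation that runs before any tool executes. The following Python sketch assumes the model returns a tool name and a JSON-encoded arguments string, which is the shape OpenAI function calling uses. The `get_order_status` tool and its `ORD-` ID format are hypothetical:

```python
import json
import re
from typing import Any, Callable, Dict


class ToolCallRejected(Exception):
    pass


def _validate_order_lookup(args: Dict[str, Any]) -> Dict[str, Any]:
    # Hypothetical tool: accepts exactly one well-formed order ID, nothing else.
    if set(args) != {"order_id"}:
        raise ToolCallRejected(f"unexpected arguments: {sorted(args)}")
    order_id = args["order_id"]
    if not isinstance(order_id, str) or not re.fullmatch(r"ORD-\d{6}", order_id):
        raise ToolCallRejected("order_id must look like ORD-123456")
    return args


# Allowlist: tool name -> argument validator. Unlisted tools are refused.
TOOL_VALIDATORS: Dict[str, Callable[[Dict[str, Any]], Dict[str, Any]]] = {
    "get_order_status": _validate_order_lookup,
}


def vet_tool_call(name: str, arguments_json: str) -> Dict[str, Any]:
    """Check a model-requested call before any code runs.

    `arguments_json` is the raw JSON string the model produced; it is treated
    as untrusted input, exactly like a form field submitted from the web.
    """
    validator = TOOL_VALIDATORS.get(name)
    if validator is None:
        raise ToolCallRejected(f"tool {name!r} is not allowlisted")
    try:
        args = json.loads(arguments_json)
    except json.JSONDecodeError as exc:
        raise ToolCallRejected(f"arguments are not valid JSON: {exc}") from None
    if not isinstance(args, dict):
        raise ToolCallRejected("arguments must be a JSON object")
    return validator(args)
```

Rejecting unexpected extra arguments matters as much as checking formats. An injected prompt often tries to smuggle in an extra field, such as a destination email address.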

7. Monitor and Log Behavior

The system needs to track every AI Agent action in real time and record any deviation from normal activity patterns through behavioral audit logging.

Example scenario: If an Agent always connects from a server IP in Tokyo and a request suddenly arrives from Europe, the system should trigger context-aware revocation and disconnect immediately.
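The Tokyo/Europe scenario can be sketched in Python as a monitor that writes a structured audit event for every request and revokes the Agent on region drift. `BehaviorMonitor` is a hypothetical name, and the AWS-style region labels are illustrative. A real system would compare against a learned baseline and propagate revocation to the secrets manager:

```python
import json
import logging
import time
from typing import Dict, List, Set

audit_log = logging.getLogger("agent.audit")


class BehaviorMonitor:
    """Hypothetical monitor: logs each request and revokes on region drift."""

    def __init__(self, baseline_regions: Dict[str, str]) -> None:
        self.baseline = baseline_regions  # agent_id -> expected region
        self.revoked: Set[str] = set()
        self.events: List[dict] = []

    def observe(self, agent_id: str, region: str, action: str) -> bool:
        """Record the request; return False if the agent is (now) revoked."""
        expected = self.baseline.get(agent_id)
        anomalous = expected is None or region != expected
        event = {
            "ts": time.time(),
            "agent": agent_id,
            "region": region,
            "action": action,
            "anomalous": anomalous,
        }
        self.events.append(event)
        audit_log.info(json.dumps(event))  # structured audit trail entry
        if anomalous:
            # Context-aware revocation: cut the agent off immediately.
            self.revoked.add(agent_id)
        return agent_id not in self.revoked
```

Once revoked, the Agent stays blocked even if later requests look normal again, so an attacker cannot simply switch back to a familiar IP.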


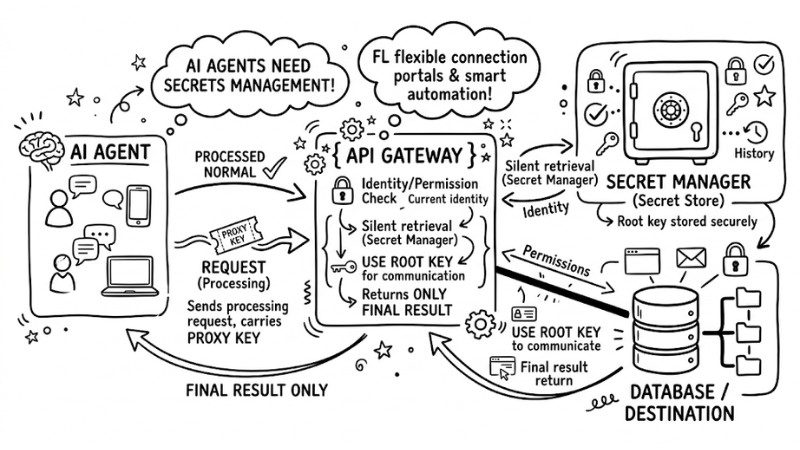

How Proxy Key Architecture Works in Practice

Standard Workflow from AI Agent to Secret Store

[AI Agent] --(Proxy Key)--> [API Gateway] --(Validate)--> [Secret Manager] --(Fetch Root Key)--> [Database/SaaS]

The standard technical flow through the Proxy architecture and Secret Manager:

- The AI Agent sends a processing request, carrying a Proxy Key, to the API Gateway.

- The Gateway checks the Agent's current identity and permissions.

- If valid, the Gateway silently retrieves the Root Key from the Secret Store.

- The Gateway uses the Root Key to communicate with the destination and returns only the final result to the Agent.
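The four steps above map onto a small gateway class, sketched here in Python. `ProxyGateway`, `AgentIdentity`, and the injected `fetch_root_key` and backend callables are hypothetical. In practice, `fetch_root_key` would wrap your Secret Manager client and each backend would wrap a real HTTP or database call:

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    allowed_targets: FrozenSet[str]


class ProxyGateway:
    """Hypothetical gateway: agents hold proxy keys, never root keys."""

    def __init__(
        self,
        proxy_keys: Dict[str, AgentIdentity],
        fetch_root_key: Callable[[str], str],
        backends: Dict[str, Callable[[str, dict], dict]],
    ) -> None:
        self._proxy_keys = proxy_keys
        self._fetch_root_key = fetch_root_key  # e.g. a Secret Manager client
        self._backends = backends  # target -> call(root_key, payload)

    def handle(self, proxy_key: str, target: str, payload: dict) -> dict:
        # Receive the request and check the Agent's identity and permissions.
        identity = self._proxy_keys.get(proxy_key)
        if identity is None or target not in identity.allowed_targets:
            raise PermissionError("proxy key not valid for this target")
        # Retrieve the root key from the secret store, server-side only.
        root_key = self._fetch_root_key(target)
        # Call the destination and hand back only the result, never the key.
        return self._backends[target](root_key, payload)
```

Because the root key exists only inside `handle`, revoking a misbehaving Agent means deleting its proxy key; the root key itself does not need to be rotated.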


Advantages in Scaling (Multi-cloud AI Orchestration)

When you deploy distributed systems across AWS, Azure, and GCP, a Proxy Key architecture unifies identity management, so you no longer need to configure API keys manually for each cloud. This supports Multi-cloud AI orchestration by tying workflows to a single Enterprise Identity and Access Management (IAM) system.

Popular Tools and Security Ecosystems

| Tool | Pros | Cons | Best For |
|---|---|---|---|
| HashiCorp Vault | Industry standard, robust security, diverse plugin ecosystem. | Complex configuration, high overhead, latency at dynamic scale. | Large Enterprises with dedicated DevOps teams. |
| Akeyless | SaaS-based solution (Vaultless), excellent virtual machine identity integration. | Dependent on internet connection and provider platform stability. | Teams prioritizing Cloud-native solutions without managing physical infra. |
| Onboardbase | Easy setup, fast secure environment deployment, native Proxy Key support. | Integration ecosystem not yet as rich as legacy platforms. | Startups and agile AI teams needing fast deployment speed. |

FAQ

How does Zero Trust Architecture apply to AI Agents?

It means trusting no Agent by default, even one running inside the internal network. Every access request must pass continuous identity authentication and context checks (IP, time, workflow) before approval, and all data must be encrypted.
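A per-request policy check makes the Zero Trust rule concrete. In the following Python sketch, network location alone grants nothing: identity, source IP range, time window, and workflow must all match. `AccessRequest`, `AgentPolicy`, and the example values are hypothetical, and the `cert_verified` flag stands in for the outcome of an mTLS or token check:

```python
import ipaddress
from dataclasses import dataclass
from datetime import datetime, time as dtime
from typing import Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class AccessRequest:
    agent_id: str
    cert_verified: bool  # outcome of the mTLS / identity check
    source_ip: str
    at: datetime
    workflow: str


@dataclass(frozen=True)
class AgentPolicy:
    allowed_networks: Tuple[str, ...]  # CIDR strings, e.g. ("10.20.0.0/16",)
    active_from: dtime  # window does not wrap past midnight in this sketch
    active_until: dtime
    workflows: FrozenSet[str]


def evaluate(req: AccessRequest, policies: Dict[str, AgentPolicy]) -> bool:
    """Zero Trust: check every request; being on the internal network is not enough."""
    policy = policies.get(req.agent_id)
    if policy is None or not req.cert_verified:
        return False
    ip = ipaddress.ip_address(req.source_ip)
    if not any(ip in ipaddress.ip_network(n) for n in policy.allowed_networks):
        return False
    if not (policy.active_from <= req.at.time() <= policy.active_until):
        return False
    return req.workflow in policy.workflows
```

Every branch ends in a denial except the final match, which keeps the policy deny-by-default as new context checks are added.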

Can I continue using environment variables (.env) during the Test phase?

You can use .env files in local environments for fast development. However, never ship them to Production. Switch to automatic environment injection via a Secret Manager to avoid leaving key traces in files or server logs.

How do I balance security and AI Agent performance (latency)?

To reduce the latency of continuous Secret Manager calls, cache short-lived keys locally in RAM, encrypted with KMS. In addition, place the Vault in the same internal network (VPC) as the AI Agent to shorten response times.
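The local-cache half of this advice can be sketched in Python as a TTL cache that refreshes a secret shortly before it expires. `SecretCache` and the `fetch` callable, which returns `(secret, ttl_seconds)`, are hypothetical. The KMS encryption mentioned above is left out, so this sketch only holds values in process memory:

```python
import threading
import time
from typing import Callable, Dict, Tuple


class SecretCache:
    """In-memory cache of short-lived secrets to avoid a vault call per request.

    `fetch(name)` is a hypothetical callable returning (secret, ttl_seconds)
    from your secret manager. Entries are refreshed `refresh_margin` seconds
    before they expire, so an agent never uses a key right at its expiry edge.
    """

    def __init__(self, fetch: Callable[[str], Tuple[str, float]],
                 refresh_margin: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch
        self._margin = refresh_margin
        self._clock = clock
        self._entries: Dict[str, Tuple[str, float]] = {}  # name -> (secret, expires_at)
        self._lock = threading.Lock()

    def get(self, name: str) -> str:
        # The lock is held during fetch, so concurrent misses trigger only one
        # vault call (at the cost of serializing refreshes).
        with self._lock:
            entry = self._entries.get(name)
            now = self._clock()
            if entry is None or now >= entry[1] - self._margin:
                secret, ttl = self._fetch(name)
                entry = (secret, now + ttl)
                self._entries[name] = entry
            return entry[0]

    def invalidate(self, name: str) -> None:
        """Drop a cached secret immediately, e.g. after a revocation event."""
        with self._lock:
            self._entries.pop(name, None)
```

The `invalidate` hook matters for security: a cache that cannot be flushed would keep serving a key that has already been revoked upstream.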

AI Agent Secrets Management is not merely about hiding character strings; it is about building a flexible Cybersecurity Framework: dynamic identity, instant provisioning, and automated revocation. It is time to re-evaluate your current CI/CD systems and eliminate static keys before an AI Agent accidentally opens the door to your infrastructure for attackers!
